KEY POINTS

-

Exercise-nutrient-interactions promote or inhibit the activities of a number of cell signaling pathways and can modulate training adaptation.

-

Manipulating carbohydrate (CHO) availability is common practice for athletes training for endurance-based sports.

-

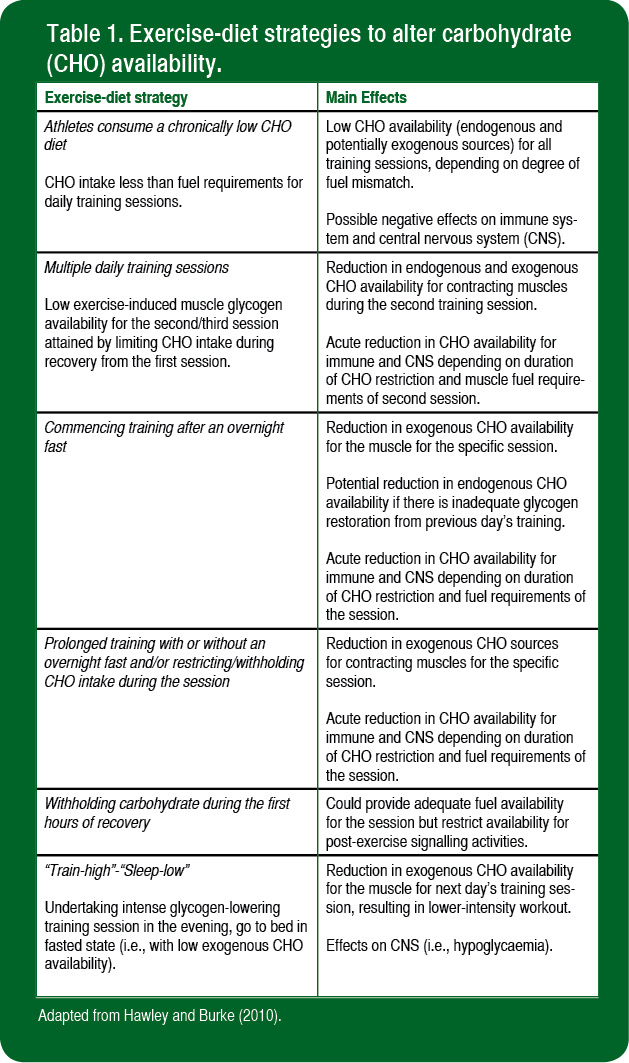

Low CHO availability can be achieved by consuming a chronically low CHO diet; twice-a-day training sessions in which CHO is withheld between workouts, overnight fasting, prolonged training and restricting or postponing CHO intake during the session, or delaying CHO intake during recovery from endurance training.

-

Independent of prior training status, short-term training programs in which up to half of the prescribed workouts are started with either low muscle glycogen levels and/or low CHO availability augment training adaptations to the same or to a greater extent than when similar workouts are undertaken with normal glycogen stores.

-

There is no clear evidence that current “train-low” strategies enhance the capacity to undertake high-intensity training or improve athletic performance.

INTRODUCTION

Physical work capacity and carbohydrate (CHO) availability are highly interrelated, and it is currently accepted that optimal adaptation to the demands of repeated endurance-based training sessions requires a diet that can replenish muscle energy reserves on a daily basis. Accordingly, exercise physiologists and sport nutritionists typically recommend that when training for events in which CHO-based fuels (i.e., muscle and liver glycogen, blood glucose, muscle and liver lactate) are the most heavily metabolized, athletes should consume a diet high in CHO (Burke et al., 2011). While the premise that high CHO availability promotes the optimal training response has gained acceptance, it should be noted that few studies have systematically manipulated dietary CHO intake in well-trained athletes throughout a competitive season and examined the effect on training responses/adaptations and performance. Furthermore, the premise that high CHO availability is essential to promote a superior training response presupposes that a surplus rather than a lack of substrate is the main “driver” for skeletal muscle remodelling and adaptation. In this regard, Chakravarthy and Booth (2004) proposed that a “cycling” of muscle glycogen stores may be desirable to promote the optimal training response/adaptation. Hansen and colleagues (2005) were the first to propose that deliberately commencing selected training sessions with low muscle glycogen concentrations would improve the training adaptation to a greater magnitude than training with normal (or high) glycogen availability. This review provides a synopsis of how training response adaptations can be modified by CHO availability. Various strategies for altering CHO content are discussed and the results of contemporary studies that have determined the effects of manipulating CHO availability on endurance training adaptation and exercise capacity are examined. 
Comprehensive reviews of these issues can be found elsewhere (Hawley & Burke, 2010; Hawley et al., 2011; Hawley & Morton, 2014; Philp et al., 2012).

DIETARY MODULATION OF TRAINING ADAPTATION: “TRAINING-LOW”

During the past decade, advances in molecular biology have allowed exercise scientists to determine how endurance-based training programs promote major adaptations in skeletal muscle that result in mitochondrial biogenesis and a concomitant increase in exercise capacity. As a result of a greater understanding of the molecular bases of training adaptation, recent interest has focused on how nutrient availability might modify the regulation of many of the contraction-induced events in muscle in response to endurance-based exercise (Hawley et al., 2011). Nutrient-gene and nutrient-protein interactions can promote or inhibit the activities of a number of cell signaling pathways and, thereby, have the potential to modulate training adaptation and subsequent performance capacity.

Hansen et al. (2005) first hypothesized that commencing endurance-based exercise with low glycogen availability would promote a greater training adaptation compared to when the same workouts were undertaken with normal muscle glycogen stores. Such a notion seems to conflict with the longstanding belief that athletes undertaking prolonged, intense endurance training programs should consume a high CHO diet at all times. However, there have been subtle amendments to the sports nutrition guidelines regarding CHO intake in the athlete’s daily diet (Burke, 2010). Rather than promoting a high CHO intake for all athletes, current guidelines promote a sliding scale of CHO intake with the objective of matching the estimated fuel costs of the athlete’s training and recovery (Burke, 2010; Burke et al., 2011). This recommendation is underpinned by the rationale that prolonged, intense training sessions should still be undertaken with high CHO availability but that some sessions (low-intensity, skill-based) may be undertaken with lower fuel supplies from muscle glycogen and other CHO-based fuels. In reality, it is unlikely that competitive endurance athletes commence every training session with high CHO availability. However, the move away from a “blanket” recommendation of a high CHO intake at all times has also created misunderstanding amongst some coaches and athletes. “Train-low” has now become a common catchphrase in both athletic circles and the scientific literature used to describe a host of (different) practices or as a generic or “one-size-fits-all” theme promoted as a replacement to the era of the “high CHO diet” in sport. 
There are several ways of achieving low CHO availability before, during and after training sessions which differ in the site of low CHO status (i.e., endogenous glycogen versus exogenous or blood glucose availability), in the duration of exposure to an exercise-diet intervention, the number of tissues affected (i.e., muscle, liver) as well as the frequency and timing of their incorporation into an athlete’s periodised training program (Table 1).

As noted, the original study that can lay claim to the term “train low”, and indeed the first modern investigation of the effects of reducing muscle glycogen availability on training adaptation and performance, was undertaken by Hansen et al. (2005). These workers studied seven untrained males who completed a rigorous training program of leg/knee extensor “kicking” exercise 5 d/wk for 10 wk. Each subject’s legs were trained according to different schedules, but the total amount of work undertaken by each leg was the same: one leg trained twice a day, every second day (LOW), with the second training session commencing with low glycogen content, whereas the other leg trained daily (HIGH) under conditions of high glycogen availability. Muscle biopsies taken from both legs before and after the training regimen revealed that resting muscle glycogen content in both legs was similar pre-intervention but was increased in the leg that trained LOW after 10 wk. There was a training-induced increase in the maximal activities of citrate synthase and β-hydroxyacyl-CoA dehydrogenase (β-HAD) in both legs, but the magnitude of increase was greater in LOW than HIGH. Time to exhaustion during single-leg kicking at 90% of post-training maximal power output, the measure of exercise performance, was twice as long for LOW as for HIGH after training. The results of Hansen et al. (2005) clearly demonstrated that in previously untrained individuals, adaptation is augmented by commencing a portion (50%) of training sessions with low glycogen availability, at least for the first 10 wk of a short-term training intervention. While the findings (Hansen et al., 2005) were intriguing and received much airplay in the lay press, it was not clear if athletes with a history of endurance training would attain the same benefit as untrained, less fit individuals embarking on a fitness regimen and training with low muscle glycogen availability.
Furthermore, the training load in the study was “clamped” such that both LOW and HIGH legs trained at the same intensity: the LOW leg therefore set the “upper limit” for the workload to be undertaken by the HIGH leg. In a “real world” setting, an athlete would produce greater power outputs or speeds when performing intense endurance-based training when glycogen availability was high. Finally, it was uncertain how improvements in one-legged “kicking” time to exhaustion translated (if at all) to dynamic whole-body cycling or running.

Yeo et al. (2008) recruited male competitive cyclists or triathletes with a background of endurance training (>3 yr) and divided the athletes into two groups matched for age, peak oxygen uptake (VO2peak) and training history. Athletes completed three weeks of supervised training. One group (HIGH) trained 6 days/wk with one rest day, alternating between 100-min steady-state aerobic training rides (AT; ~70-75% of VO2peak) on the first day and high-intensity interval training (HIT; 8 x 5-min work bouts at each athlete’s maximal self-selected power output, with 1-min recovery between bouts) the next day. The AT and HIT sessions were deliberately chosen as these workouts deplete ~50% of resting muscle glycogen stores in well-nourished, trained athletes. The other group (LOW) trained twice each day, every second day, performing the AT in the morning (to decrease muscle glycogen content by ~50%), followed by 1–2 h of rest with no energy (i.e., CHO) intake, and then performing HIT at their maximal self-selected intensity. Accordingly, HIGH completed all HIT sessions at a time when muscle glycogen levels were restored, whereas LOW did the HIT sessions at a time when muscle glycogen was ~50% depleted.
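The two schedules described above can be sketched as a small data structure. This is only an illustration: the weekday layout, the variable names and the helper functions are assumptions made for the example, and only the session types, the 3-wk block length and the once-daily versus twice-every-second-day pattern come from the study description.

```python
from collections import Counter

WEEKS = 3  # length of the supervised training block described in the text

# One illustrative week per group. Each inner list is one day's sessions,
# in the order performed. The exact weekday layout is an assumption.
HIGH_WEEK = [["AT"], ["HIT"], ["AT"], ["HIT"], ["AT"], ["HIT"], []]
LOW_WEEK = [["AT", "HIT"], [], ["AT", "HIT"], [], ["AT", "HIT"], [], []]


def session_counts(week, weeks=WEEKS):
    """Count the sessions of each type completed over the whole block."""
    counts = Counter()
    for day in week:
        counts.update(day)
    return {kind: n * weeks for kind, n in counts.items()}


def hit_sessions_after_at(week):
    """Count weekly HIT sessions performed on the same day as an earlier AT ride."""
    return sum(
        1
        for day in week
        if "AT" in day and "HIT" in day and day.index("AT") < day.index("HIT")
    )


high_counts = session_counts(HIGH_WEEK)
low_counts = session_counts(LOW_WEEK)

print("HIGH block totals:", high_counts)
print("LOW block totals:", low_counts)
print("HIT sessions started after same-day AT (per week):",
      hit_sessions_after_at(HIGH_WEEK), "for HIGH vs",
      hit_sessions_after_at(LOW_WEEK), "for LOW")
```

Under these assumptions both groups complete the same number of AT and HIT sessions; what differs is that every HIT session in LOW follows a glycogen-depleting AT ride on the same day.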

The novel findings were that in skeletal muscle of already well-trained individuals, resting muscle glycogen content, the levels of several enzymes with roles in mitochondrial biogenesis and lipid metabolism (i.e., citrate synthase, β-HAD, the electron transport chain component cytochrome oxidase subunit IV), and rates of whole-body fat oxidation during submaximal exercise were enhanced to a greater extent by training twice every second day compared with training daily after the short-term intervention (Yeo et al., 2008). Remarkably, these adaptations were attained despite the observation that maximal self-selected power output was significantly lower (~8%) for the first six HIT sessions for athletes who commenced these workouts with low muscle glycogen content (i.e., the first 2 wk of the training program). In other words, even with a lower training “impulse” to the working muscles, training LOW augmented markers of training adaptation to a greater extent than when all workouts were commenced with high glycogen availability. The greater increases in markers of muscle adaptation found by Yeo et al. (2008) were in close agreement with the earlier findings of Hansen et al. (2005). However, while Hansen et al. (2005) observed a dramatic increase in exercise “performance” after training low, Yeo et al. (2008) found that power output during a 60 min cycle time trial was enhanced by the same magnitude (~11%) whether athletes trained HIGH or LOW. The “disconnect” between some of the “mechanistic” markers of training adaptation and athletic performance outcomes is discussed below.

DOES CAFFEINE “RESCUE” THE LOW POWER OUTPUTS ASSOCIATED WITH LOW CARBOHYDRATE AVAILABILITY?

In the study of Yeo et al. (2008), the substantially lower power outputs attained when commencing HIT with low compared to high glycogen availability were coupled with a significant increase in the athletes’ ratings of perceived exertion. In reality, it is unlikely that any competitive athlete would embark on an exercise-diet regimen that would reduce their capacity to perform high-intensity endurance exercise while also feeling poorly. One ergogenic aid known to reduce an individual’s perception of effort during exercise, while simultaneously enhancing exercise capacity, is caffeine. Consequently, Lane et al. (2013) determined whether a low dose of caffeine could “rescue” the reduction in maximal self-selected power output observed when individuals commenced HIT with low vs. normal glycogen availability. In their study, 12 well-trained cyclists or triathletes performed four experimental trials in which muscle glycogen content was manipulated via exercise-diet interventions so that two trials were commenced with LOW and two with HIGH muscle glycogen availability (Lane et al., 2013). Before all experimental trials, subjects ingested caffeine (3 mg/kg body mass (BM)) or placebo. Athletes were instructed to produce their maximal self-selected power output during a standardized HIT session (described above). In agreement with the earlier findings of Yeo et al. (2008), commencing HIT with low glycogen availability reduced self-selected maximal power output by ~8% compared with HIGH. Caffeine enhanced power output independently of muscle glycogen concentration (by 2.8% and 3.5% for LOW and HIGH, respectively) but could not fully restore power output to the same levels as when subjects commenced exercise with HIGH glycogen availability.
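The size of the shortfall can be illustrated with some simple arithmetic. The sketch below is a rough illustration rather than a reanalysis of the data: it assumes an arbitrary baseline of 100 units for power output with high glycogen and placebo, and it assumes that the ~8% glycogen penalty and the 2.8% caffeine gain combine multiplicatively. The function name is hypothetical.

```python
def relative_power(baseline, glycogen_penalty=0.0, caffeine_gain=0.0):
    """Scale a baseline power output by a fractional penalty and a fractional gain.

    Assumes the two effects combine multiplicatively, which is a
    simplification made for this illustration only.
    """
    return baseline * (1.0 - glycogen_penalty) * (1.0 + caffeine_gain)


BASELINE = 100.0  # arbitrary units: high glycogen, placebo (assumed reference)

high_placebo = relative_power(BASELINE)
low_placebo = relative_power(BASELINE, glycogen_penalty=0.08)
low_caffeine = relative_power(BASELINE, glycogen_penalty=0.08, caffeine_gain=0.028)

shortfall = high_placebo - low_caffeine

print(f"HIGH + placebo:  {high_placebo:.1f}")
print(f"LOW + placebo:   {low_placebo:.1f}")
print(f"LOW + caffeine:  {low_caffeine:.1f}")
print(f"Remaining shortfall vs HIGH + placebo: {shortfall:.1f} units")
```

On these assumptions caffeine recovers roughly a third of the power lost to low glycogen, which is consistent with the conclusion that it only partially “rescues” training intensity.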

EFFECTS OF “TRAINING-HIGH, SLEEPING LOW”

The original “train-low” protocol advocated twice-a-day training sessions in which only the second exercise session was undertaken with low glycogen availability (Hansen et al., 2005). As discussed, a direct outcome of this strategy was that the maximal self-selected training intensity of the second session was substantially reduced when it was commenced with low, compared to normal (or elevated), glycogen levels (Hulston et al., 2010; Yeo et al., 2008). Such an outcome is counterintuitive for the preparation of competitive athletes where high-intensity workouts are a critical component of any periodized training program (Hawley, 2013). An alternative approach is to have an athlete train-high and then sleep-low (“train-high, sleep-low”). This approach prolongs the duration of low CHO availability and potentially enhances and extends the time course of transcriptional activation of metabolic genes and their target proteins, while simultaneously conserving the training “impulse” to the working muscles (see Figure 1). In this model, an athlete would commence a HIT session in the evening with high glycogen availability, then go to bed fasted, before undertaking a subsequent prolonged, submaximal training session the next morning and then re-feeding. Delaying energy (i.e., CHO) intake and extending the time an individual spends in a low glycogen state may augment the exercise-diet-induced adaptation process by delaying the resynthesis of muscle (and liver) glycogen and up-regulating several key metabolic signaling pathways involved in mitochondrial biogenesis and lipid metabolism, compared to when individuals follow sports nutrition guidelines (i.e., high post-exercise CHO availability).
Currently, several laboratories are undertaking studies using various modifications of the “train-high, sleep-low” model (i.e., delaying post-exercise evening CHO intake, replacing CHO-based meals with high protein foods, fasting overnight, etc.), and athletes, coaches and sport scientists await the results from the investigations with interest.

Of course, it could well be that the disturbances in the cellular environment induced by starting training sessions with low glycogen availability promote enhanced signaling and underpin the superior adaptation process. As such, attempts to minimize or alleviate such conditions would negate the benefits associated with low-glycogen training. Either way, the results from Hansen et al. (2005) and Yeo et al. (2008) demonstrate that independent of prior training status, short-term (3-10 wk) training in which a portion (~50%) of sessions are commenced with low muscle glycogen levels promotes training adaptations (i.e., increases the activities of enzymes involved in energy metabolism and mitochondrial biogenesis) to a greater extent than when all workouts are undertaken with normal or elevated glycogen stores. However, despite creating conditions that should, in theory, enhance exercise capacity, the effects of this train-low strategy on a range of performance measures were equivocal (discussed below).

ALTERING EXOGENOUS CARBOHYDRATE AVAILABILITY

Manipulating endogenous muscle (and liver) glycogen stores is not the only way to alter CHO availability before, during or after training (Table 1). Another strategy is to alter the exogenous or blood supply of glucose. Akerstrom et al. (2009) studied the effects of altered exogenous glucose availability during a 10-wk program of single-leg knee-extensor training. Male subjects ingested a 6 g/100 mL glucose solution (for an intake of 0.7 g CHO/kg BM/h) when training one leg and a placebo when training the other leg. Training consisted of 2 h of submaximal “kicking” with each leg being trained on alternate days. While there were training-induced increases in the maximal activities of both oxidative and lipolytic enzymes (citrate synthase and β-HAD), tracer-derived measures of lipid turnover and exercise capacity in both legs, the magnitude of improvement was similar and independent of exogenous CHO availability. De Bock et al. (2008) also investigated whether muscle adaptation to exercise was affected by nutritional status during training sessions. Recreationally fit males undertook a 6-wk training program comprising 1-2 h/day cycling at 75% of VO2peak for 3 days/wk, during which workouts were started either in a fasted state or 90 min after a CHO-rich breakfast with additional CHO supplementation (1 g/kg BM/h) throughout exercise. In agreement with the results of Akerstrom et al. (2009), a variety of metabolic markers (including succinate dehydrogenase activity, GLUT-4 and hexokinase II content) were increased to a similar extent with or without CHO supplementation. Despite a significant increase in fatty acid binding protein after “fasted” training, rates of fat oxidation during submaximal exercise were not altered by either training intervention.
The results from these studies suggest that the major adaptations to endurance training are not augmented by reduced exogenous CHO availability, at least in moderately fit individuals (Akerstrom et al., 2009; De Bock et al., 2008). Contrasting results were reported by Nybo and colleagues (2009), who determined the effects of 8 wk of endurance training in previously untrained males who consumed either a sweetened placebo (low CHO availability) or a 10% CHO solution (high CHO availability) during workouts. They found that training without exogenous CHO elicited greater increases in resting muscle glycogen, GLUT-4 and β-HAD. However, these adaptations did not translate to beneficial functional outcomes (i.e., performance).
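To give a sense of what the intake rates quoted above mean in practice, the following sketch converts a CHO target expressed per kg of body mass into an hourly drink volume. It uses the 6 g/100 mL concentration and 0.7 g CHO/kg BM/h intake reported for Akerstrom et al. (2009). The body masses of 60 and 75 kg are hypothetical values chosen for illustration, as is the function name.

```python
def drink_volume_ml_per_h(body_mass_kg, target_g_per_kg_h, grams_per_100_ml):
    """Return the drink volume (mL/h) needed to deliver a CHO target.

    body_mass_kg      -- athlete body mass (hypothetical values used below)
    target_g_per_kg_h -- CHO intake target in g per kg body mass per hour
    grams_per_100_ml  -- drink concentration in g CHO per 100 mL
    """
    cho_g_per_h = body_mass_kg * target_g_per_kg_h
    return cho_g_per_h / grams_per_100_ml * 100.0


# Concentration and intake rate as quoted for Akerstrom et al. (2009).
CONCENTRATION_G_PER_100_ML = 6.0
TARGET_G_PER_KG_H = 0.7

for mass_kg in (60.0, 75.0):  # hypothetical body masses
    volume = drink_volume_ml_per_h(
        mass_kg, TARGET_G_PER_KG_H, CONCENTRATION_G_PER_100_ML
    )
    cho = mass_kg * TARGET_G_PER_KG_H
    print(f"{mass_kg:.0f} kg: {cho:.1f} g CHO/h, about {volume:.0f} mL/h of drink")
```

For a 75 kg individual this works out to about 52.5 g CHO and 875 mL of drink per hour of training.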

Withholding CHO during training may also have some negative effects on performance outcomes. Cox et al. (2010) determined the effects of undertaking strenuous daily endurance training with either high or low CHO availability during a month-long training block. During the intervention, 16 endurance-trained athletes were fed a standard moderate-CHO diet. Half the athletes were randomly allocated to a high-CHO intake group (HICHO) and consumed a CHO solution (10% glucose solution that provided an additional 25 kJ/kg BM of CHO/h of training), while the remainder (LOCHO) were fed a placebo during training and ingested energy-matched fat- and protein-rich snacks after training sessions. While there were no clear effects of either training-diet intervention on several metabolic parameters and exercise performance, skeletal muscle oxidation of exogenous glucose during exercise after the intervention period was only increased in athletes who trained with CHO (14% vs. 1%). For the competitive athlete, any impairment in the ability to oxidize ingested CHO would be a major disadvantage in any endurance-based event.

To date, only one study has examined the combined effects of manipulating endogenous muscle glycogen and exogenous glucose availability on training adaptation. In that investigation, recreationally active subjects undertook a variety of different training protocols (training twice daily for 2 days/wk or training daily for 4 days/wk) and dietary manipulations (with or without CHO before and during exercise) during a 6-wk intervention (Morton et al., 2009). There was a training-induced increase in the protein content of several markers of muscle oxidative capacity but, apart from a single enzyme, no differences in the magnitude of change between groups who trained with low vs. high CHO availability. Performance during high-intensity intermittent exercise was not enhanced to a greater extent by altering CHO availability.

SKELETAL MUSCLE ADAPTATIONS VERSUS PERFORMANCE: A MISMATCH

A common and recurrent theme when examining studies that have manipulated CHO availability and its effect on training adaptation and performance is the discrepancy between changes in many of the cellular “mechanistic” variables measured in skeletal muscle and whole body functional outcomes. There are many potential reasons to explain this “mismatch,” and it is probably a combination of several factors that underlie such a disparity. First, a direct relationship between athletic performance and many of the training-induced changes in cellular events that occur in muscle in response to the various “train-low” strategies may not exist. Indeed, just because molecular techniques now exist to detect and measure a vast array of cellular “candidate markers,” this does not mean they have a functional role in explaining performance variability. Indeed, in some instances, the technical variability of various enzymatic/protein assays and/or gene measurements greatly exceeds the small biological changes that manifest as improvements in performance. Second, highly trained athletes are likely to have already maximized many adaptations in the muscle and further increases in selected proteins may only play a permissive role (if any) in promoting the capacity for exercise. Indeed, the absolute levels of muscle proteins with roles in mitochondrial biogenesis and/or substrate transport, uptake and oxidation are not likely, in and of themselves, to be rate-limiting for athletic performance. Skeletal muscle functional capacity is, of course, only one determinant of athletic performance, which typically involves the integration of whole body systems including the cardiovascular, endocrine and central nervous systems.

A third line of reasoning to explain the disconnect between the lack of enhancement in performance outcomes and increases in various muscle markers of adaptation is that we lack the appropriate tools to accurately measure sports performance in the laboratory. Many endurance races are won by very small margins (< 1% usually separates the top three athletes), and exercise scientists currently lack the ability to detect changes this small, even though they are large enough to alter the outcomes of real-world events. A fourth possibility is that some “train-low” strategies may have negative effects on parameters related to an athlete’s performance that, either acutely or over the long term, counteract any positive effects achieved on isolated muscle characteristics. For example, rates of ingested CHO oxidation by muscle are reduced in athletes who “train-low” compared to those who train with high exogenous CHO availability (Cox et al., 2010), while the substantial increases in rates of whole-body (Yeo et al., 2008) and muscle (Hulston et al., 2010) fat oxidation observed after training with low glycogen may not improve performance in endurance events where there is a reliance on CHO-based fuels. An indirect outcome of dietary periodization is that it may reduce the training stimulus, especially when athletes commence high-intensity workouts with low muscle glycogen availability. A common finding when training sessions are undertaken with low CHO availability is that subjects frequently choose a lower workload or intensity because they perceive the effort to be higher, at least in their initial exposure to training low (Yeo et al., 2008). Interference with such sessions is likely to impair other adaptations to training such as muscle fibre recruitment and patterns of substrate utilization.
Finally, studies that have investigated various “train-low” strategies have only been undertaken for short periods, with little or no consideration given to integrate experimental interventions into the athlete’s competitive periodized training cycle. Prior to embarking on lab-based investigations of “train-low,” it should be clarified whether successful athletes have already refined optimal nutrient-training protocols that enhance endurance performance.

PUTTING IT INTO PRACTICE: CONCLUSIONS AND FUTURE STUDIES

Investigations that have manipulated CHO availability demonstrate that independent of prior training status, short-term (3-10 wk) training programs in which up to half of all prescribed workouts are commenced with low muscle glycogen levels and/or low exogenous CHO availability augment training adaptation to the same or to a greater extent than when similar workouts are undertaken with normal glycogen stores (for review see Hawley & Burke, 2010; Hawley et al., 2011). Certainly, there are no instances of training adaptation or performance being impaired by undertaking a period of training with lower CHO availability. Yet, despite increasing the muscle adaptive response while concomitantly reducing the reliance on CHO-based fuels during submaximal exercise, there is no clear evidence that these strategies either enhance the ability to train at higher work-rates or speeds or improve exercise performance. Whether deliberately or unplanned (i.e., a failure to consume adequate CHO between workouts), competitive endurance athletes certainly commence some of their training with what might be considered “sub-optimal” CHO reserves. Hence, when these athletes participate in studies that typically replace a handful of prescribed workouts with “train-low” sessions, it is hardly surprising that training capacity and performance are unchanged: the study design has merely replicated what athletes are already doing in real life.

An important consideration when discussing alternate exercise-diet interventions, and often overlooked by both exercise physiologists and sport nutritionists, is that we currently lack valid data on the actual fuel costs of endurance training sessions. It seems somewhat irrelevant to discuss the degree of glycogen depletion or restricted CHO availability that is needed to potentiate the effect of a training stimulus or the length of time that periodic low-CHO training needs to be undertaken to demonstrate functional changes to training and/or performance outcomes, when we have no idea of the CHO demands of training sessions in the first place. It is clear that studies on long-term dietary periodization strategies, especially those mimicking real-life athletic practices, are urgently needed before we can truly assess the effects of various “train-low” strategies on training adaptation and athletic performance.

REFERENCES

Akerstrom, T.C., C.P. Fischer, P. Plomgaard, C. Thomsen, G. van Hall, and B.K. Pedersen (2009). Glucose ingestion during endurance training does not alter adaptation. J. Appl. Physiol. 106:1771-1779.

Burke, L.M. (2010). Fueling strategies to optimize performance: training high or training low? Scand. J. Med. Sci. Sports 20 (Suppl 2):48-58.

Burke, L.M., J.A. Hawley, S.H. Wong, and A.E. Jeukendrup (2011). Carbohydrates for training and competition. J. Sports Sci. 29 Suppl 1:S17-S27.

Chakravarthy, M.V., and F.W. Booth (2004). Eating, exercise, and "thrifty" genotypes: connecting the dots toward an evolutionary understanding of modern chronic diseases. J. Appl. Physiol. 96:3-10.

Cox, G.R., S.A. Clark, A.J. Cox, S.L. Halson, M. Hargreaves, J.A. Hawley, N. Jeacocke, R.J. Snow, W.K. Yeo, and L.M. Burke (2010). Daily training with high carbohydrate availability increases exogenous carbohydrate oxidation during endurance cycling. J. Appl. Physiol. 109:126-134.

De Bock, K., W. Derave, B.O. Eijnde, M.K. Hesselink, E. Koninckx, A.J. Rose, P. Schrauwen, A. Bonen, E.A. Richter, and P. Hespel (2008). Effect of training in the fasted state on metabolic responses during exercise with carbohydrate intake. J. Appl. Physiol. 104:1045-1055.

Hansen, A.K., C.P. Fischer, P. Plomgaard, J.L. Andersen, B. Saltin, and B.K. Pedersen (2005). Skeletal muscle adaptation: training twice every second day vs. training once daily. J. Appl. Physiol. 98:93-99.

Hawley, J.A. (2013). Nutritional strategies to modulate the adaptive response to endurance training. Nestle Nutr. Inst. Workshop Ser. 75:1-14.

Hawley, J.A., and L.M. Burke (2010). Carbohydrate availability and training adaptation: Effects on cell metabolism. Exerc. Sport Sci. Rev. 38:152-60.

Hawley, J.A., and J.P. Morton (2014). Ramping up the signal: promoting endurance training adaptation in skeletal muscle by nutritional manipulation. Clin. Exp. Pharmacol. Physiol. (in press).

Hawley, J.A., L.M. Burke, S.M. Phillips, and L.L. Spriet (2011). Nutritional modulation of training-induced skeletal muscle adaptations. J. Appl. Physiol. 110:834-845.

Hulston, C.J., M.C. Venables, C.H. Mann, C. Martin, A. Philp, K. Baar, and A.E. Jeukendrup (2010). Training with low muscle glycogen enhances fat metabolism in well-trained cyclists. Med. Sci. Sports Exerc. 42:2046-2055.

Lane, S.C., J.L. Areta, S.R. Bird, V.G. Coffey, L.M. Burke, B. Desbrow, L.G. Karagounis, and J.A. Hawley (2013). Caffeine ingestion and cycling power output in a low or normal muscle glycogen state. Med. Sci. Sports Exerc. 45:1577-84.

Morton, J.P., L. Croft, J.D. Bartlett, D.P. MacLaren, T. Reilly, L. Evans, A. McArdle, and B. Drust (2009). Reduced carbohydrate availability does not modulate training-induced heat shock protein adaptations but does upregulate oxidative enzyme activity in human skeletal muscle. J. Appl. Physiol. 106:1513-1521.

Nybo, L., K. Pedersen, B. Christensen, P. Aagaard, N. Brandt, and B. Kiens (2009). Impact of carbohydrate supplementation during endurance training on glycogen storage and performance. Acta Physiol. 197:117-127.

Philp, A., M. Hargreaves, and K. Baar (2012). More than a store: regulatory roles for glycogen in skeletal muscle adaptation to exercise. Am. J. Physiol. 302:E1343-E1351.

Yeo, W.K., C.D. Paton, A.P. Garnham, L.M. Burke, A.L. Carey, and J.A. Hawley (2008). Skeletal muscle adaptation and performance responses to once a day versus twice every second day endurance training regimens. J. Appl. Physiol. 105:1462-1470.